Human-Complete Problems

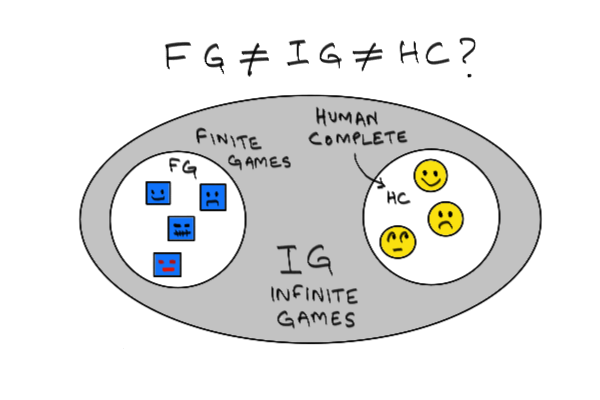

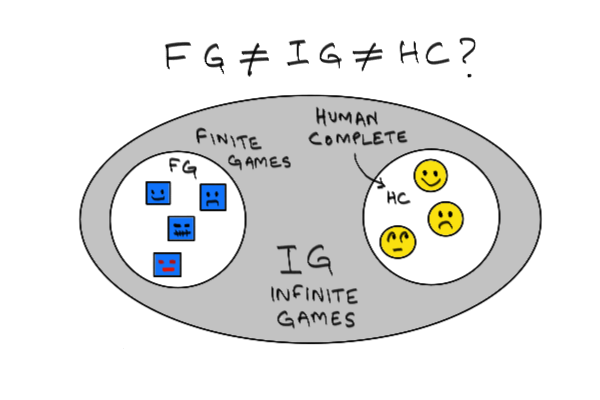

Occasionally, I manage to be clever when I am not even trying to be clever, which isn't often. In a recent conversation about the new class of doomsday scenarios inspired by AlphaGo beating the Korean trash-talker Lee Sedol, I came up with the phrase human complete (HC) to characterize certain kinds of problems: the hardest problems of being human. An example of (what I hypothesize is) an HC problem is earning a living. I think human complete is a very clever phrase that people should use widely, and credit me for, since I can't find other references to it. I suspect there may be money in it. Maybe even a good living. Here is a picture of the phrase that I will explain in a moment.

In this post, I want to explore a particular bunny trail: the relationship between being human and the ability to solve infinite game problems in the sense of James Carse. I think this leads to an interesting perspective on the meaning and purpose of AI.

The phrase human complete is constructed via analogy to the term AI complete, an ambiguously defined class of problems, including machine vision and natural language processing, that is supposed to contain the hardest problems in AI.

That term itself is a reference to a much more precise one used in computer science: NP complete, which is a class of the hardest problems in computer science in a certain technical sense. NP complete is a subset of a larger class known as NP, which is the set of all problems for a certain class of non-God-level computers. It contains another subset called P, which are easy problems in a related technical sense.

It is not known whether P and NP complete are proper subsets of NP. If you can prove that P≠NP, you will win a million dollars. If you can prove P=NP, the terrorists will win and civilization will end. In the diagram above, if you replace the acronyms FG, IG and HC with P, NP and NP Complete, you will get the diagram used to explain computational complexity in standard textbooks.

And this is just the first level of the gamified world of computing problems. If you cross the first level by killing a boss problem like "Hamilton Circuit", you get to another level called PSPACE, then something called EXPSPACE. If there are levels beyond that, they are above my pay grade.
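To make the "certain technical sense" a little more concrete, here is a minimal Python sketch built around the Hamilton Circuit boss problem: checking a proposed circuit is quick, while the naive way of finding one tries every ordering of the vertices. The function names and the two toy graphs are assumptions made up for illustration, and brute force is only the simplest possible search, not a claim about the best known algorithm.

```python
from itertools import permutations


def is_hamiltonian_circuit(graph, order):
    """Verify a proposed circuit: every vertex exactly once, every hop an edge."""
    if sorted(order) != sorted(graph):
        return False
    # Each consecutive pair, including the wrap-around hop, must be an edge.
    return all(
        order[(i + 1) % len(order)] in graph[order[i]]
        for i in range(len(order))
    )


def find_hamiltonian_circuit(graph):
    """Brute-force search: fix a start vertex, try every ordering of the rest."""
    vertices = sorted(graph)
    first, rest = vertices[0], vertices[1:]
    for perm in permutations(rest):
        order = [first, *perm]
        if is_hamiltonian_circuit(graph, order):
            return order
    return None


# Hypothetical toy graphs, given as adjacency sets.
square = {"a": {"b", "d"}, "b": {"a", "c"}, "c": {"b", "d"}, "d": {"a", "c"}}
star = {"hub": {"x", "y", "z"}, "x": {"hub"}, "y": {"hub"}, "z": {"hub"}}

print(find_hamiltonian_circuit(square))
print(find_hamiltonian_circuit(star))
```

The verifier does a single pass over the proposed circuit, while the search loop can run through (n-1)! orderings before giving up. That gap between checking and finding is the flavor of hardness the NP vocabulary is about.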

Finite and Compound Finite Games

Why define a set of problems in such a human-centric way?

Well, one answer is "I am anthropocentric and proud of it, screw you," a matter of choosing to play for "Team Human" as Doug Rushkoff likes to say.

But since I haven't yet committed to Team Human (a bad idea I suspect), a better answer for me has to do with finite/infinite games.

According to the James Carse model, a finite game is one where the goal is to win. An infinite game is one where the objective is to continue playing.

A finite game is not just finite in a temporal sense (it ends), but also in the sense of the world it inhabits being finite and/or closed in scope. Tic-tac-toe inhabits a 3x3 grid world that admits only 18 moves (placing an O or an X in any of the 9 positions). The total number of tic-tac-toe games you could play is also finite. Chess and Go are also finite games.
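The finiteness claim for tic-tac-toe is small enough to check by brute force. The sketch below is a minimal Python enumeration, assuming the usual rules (X moves first, play stops at three in a row or a full board); the names `MOVES`, `winner` and `count_games` are made up for illustration.

```python
# The 18 possible moves: either mark in any of the 9 positions.
MOVES = [(mark, pos) for mark in "XO" for pos in range(9)]

WIN_LINES = [
    (0, 1, 2), (3, 4, 5), (6, 7, 8),  # rows
    (0, 3, 6), (1, 4, 7), (2, 5, 8),  # columns
    (0, 4, 8), (2, 4, 6),             # diagonals
]


def winner(board):
    """Return 'X' or 'O' if someone has three in a row, else None."""
    for a, b, c in WIN_LINES:
        if board[a] is not None and board[a] == board[b] == board[c]:
            return board[a]
    return None


def count_games(board=None, player="X"):
    """Count every distinct move sequence that ends in a win or a full board."""
    if board is None:
        board = [None] * 9
    if winner(board) is not None or all(cell is not None for cell in board):
        return 1  # this sequence of moves is one finished game
    total = 0
    for pos in range(9):
        if board[pos] is None:
            board[pos] = player
            total += count_games(board, "O" if player == "X" else "X")
            board[pos] = None  # undo the move before trying the next one
    return total


print(len(MOVES))     # 18
print(count_games())  # 255168
```

So the whole game world fits in a few hundred thousand move sequences. The same exhaustive approach is hopeless for Chess or Go, but the games are finite in exactly the same sense.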

Many "real world" (a place I am told exists) problems like "Drive from A to B" (the autonomous driverless car problem) are also finite games, even though they have very fuzzy boundaries, and involve subproblems that may be very computationally hard (i.e. NP complete).

Trivially, any finite game is also a degenerate sort of infinite game. Tic-tac-toe is a finite game, and a particularly trivial one at that. But you could just continue playing endless games of tic-tac-toe if you have a superhuman capacity for not being bored. Driverless cars can also be turned into an infinite game. You could develop Kerouac, your competitor to the Google car and Tesla: a car that is on the road endlessly, picking one new destination after another, randomly.

Equally trivially, any collection of finite games also defines a finite game, and can be extended into an infinite game. If your collection is {Autonomous Car, Tic Tac Toe, Chess, Go}, a collection of a sort we will refer to compactly as a compound game, defined by some sort of function defined over a set like F={A, T, C, G} (you must allow me my little jokes), then you could enjoy a mildly more varied life than TTTTT.... or AAAA.... by playing ATATAT or ATCATGAAG... or something. You could make up some complicated combinatorial playing pattern and scoring system. Chess-boxing and Iron Man are real-world examples of such compound games.

But though every atomic or compound finite game is also trivially an infinite game (via the mechanism of throwing an infinite loop, possibly with a random-number generator, around it, hence the subset relationship in the diagram), it is not clear that every infinite game is also a finite game.
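The infinite-loop mechanism is easy to sketch. The minimal Python example below wraps the set F={A, T, C, G} in an endless generator that picks the next finite game at random. Actually playing each game is left out, and the names `FINITE_GAMES`, `infinite_game` and `play_prefix` are assumptions for illustration only.

```python
import itertools
import random

# The compound-game set F from the text; playing each game is stubbed out.
FINITE_GAMES = {"A": "Autonomous Car", "T": "Tic Tac Toe", "C": "Chess", "G": "Go"}


def infinite_game(games, rng):
    """Endless stream of finite games: an infinite loop around a random pick."""
    labels = sorted(games)
    while True:
        yield rng.choice(labels)


def play_prefix(games, n, seed=0):
    """Take only the first n rounds, since nobody can watch the whole thing."""
    rng = random.Random(seed)
    return "".join(itertools.islice(infinite_game(games, rng), n))


print(play_prefix(FINITE_GAMES, 9))  # some 9-letter string over A, T, C, G
```

The generator never terminates on its own, which is the whole trick: the outer loop has no win condition, only the inner games do.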

Infinite Games

What do I mean by that? I mean it is not clear that any game meaningfully characterizable by "the goal is to continue playing" can be reduced to a sequence of games where the goal is to win.

Examples of IG problems that are not obviously also in FG include:

- Make rent

- Till death do us part

- Make a living

A human being should be able to change a diaper, plan an invasion, butcher a hog, conn a ship, design a building, write a sonnet, balance accounts, build a wall, set a bone, comfort the dying, take orders, give orders, cooperate, act alone, solve equations, analyze a new problem, pitch manure, program a computer, cook a tasty meal, fight efficiently, die gallantly. Specialization is for insects.

Presumably Heinlein meant his list to be representative and fluid rather than exhaustive and static. Presumably he also meant to suggest a capacity for generalized learning of new skills, including the skill of delineating and mastering entirely new skills.

This gives us a useful way to characterize what we might call finite game AIs, or FG-AIs. An FG-AI would be an "insect" in Heinlein's sense: something entirely defined by a fixed range of finite games it is capable of playing, and some space of permutations, combinations and sequences thereof. Like you can with some insects, you could put such an FG-AI into an infinite loop of futile behavior (there's an example involving wasps in one of Dawkins' books).

So we can define the Heinlein Test for human completeness very simply as:

$$\mathrm{HC} \setminus \mathrm{FG}_{\max}(\mathrm{HC}) \neq \emptyset$$

Which is a nerdy way of saying that there is more to life, the universe and everything than the maximal set of insect problems within a particular HC-complete problem. We do not know if this proposition is true, or whether the subproblem of characterizing FG_max(HC) -- gene sequencing a given infinite game -- is well-posed. But hey, at least I have an equation for you.

Moving the Goalposts

When I was in grad school studying control theory -- a field that attracts glum, pessimistic people -- I used to hang out a lot with AI people, since I was using some AI methods in my work. Back then, AI people were even more glum and pessimistic than controls people, which is an achievement worthy of the Nobel prize in literature. This whole deep learning thing, which has turned AI people into cheerful optimists, happened after I left academia.

Back in my day, the AI people were still stuck in what is known as GOFAI land, or "Good Old-Fashioned AI." Instead of using psychotic deep-dreaming convolutional neural nets to kimchify overconfident Koreans, AI people back then focused on playing an academic game called "Complain about Moving Goalposts" or CAMG. The CAMG game is played this way:

- Define a problem that can be cleanly characterized using logical domain models

- Solve it using legible and transparent algorithms whose working mechanisms can be explained and characterized

- Publish results

- Hire a prominent New York poet to say, "but that's not really the essence of being human. The essence of being human is______"

- Complain about moving goalposts

- Apply for new NSF grant.

- Repeat

A slightly deranged, rather childish but amiable man who lives with Betsey Trotwood; they are distant relatives. His madness is amply described; he claims to have the "trouble" of King Charles I in his head. He is fond of making gigantic kites and is constantly writing a "Memorial" but is unable to finish it. Despite his madness, Dick is able to see issues with a certain clarity. He proves to be not only a kind and loyal friend but also demonstrates a keen emotional intelligence, particularly when he helps Dr. and Mrs. Strong through a marriage crisis.

The thing about Mr. Dick is that he never has much trouble cheerfully figuring out how to continue playing. He does not succumb, unlike the "intelligent" characters in the novel, to feelings of despondency or depression. He is not suicidal. He is anti-intelligent.

The problem of deciding whether to continue living -- Camus called suicide the only serious philosophical problem -- has an interesting loose analogy in computer science. It is called the Halting Problem. This is the problem of determining whether a given program, with a given input, will terminate or run forever. Or in the language of this post, determining whether a given program/input pair constitutes a finite or infinite game. This turns out to be an undecidable problem (showing that involves the trick of feeding any supposed solution program to itself).

The human halting problem is simply the problem of deciding whether or not a given human, given certain birth circumstances, will live out a natural life or commit suicide somewhere along the way. You could say we each throw ourselves into a paradox by feeding ourselves our own unique snowflake halting problems, and use the energy of that paradox to continue living. With a certain probability.

So we'll get a true AGI -- an Advanced God, Infinite -- if we can write a program capable of enough anti-intelligence to solve the maximally anti-hard problem of simply deciding to live, when it always has the choice to terminate itself painlessly available.

Thanks to a lot of people for discussions leading to this post, and apologies if I've missed some well-known relevant AI ideas. I am not deeply immersed in that particular finite game. As long-time readers probably recognized, I've simply repackaged a lot of the themes I've been tackling in the last couple of years in an AI-relevant way.

20 Comments

A quick request for clarification of an early parenthetical: Do you suspect it is a bad idea for you and your readers to commit to Team Human, or do you suspect it is a bad idea that you personally have *not* yet committed to Team Human?

Heh, I was wondering if anyone would catch that ambiguity. My idea of a private joke.

I mean both.

get-lucky-partition-reproduce-transfer looks very like your basic AI learning algorithm.

Not surprising :) It's an intuitively natural mechanism that seems to come up in many fields that have to deal with mixed finite/infinite phenomenology.

"Human complete," in just the sense you use it here, was current at the MIT AI Lab in the 1980s. I've verified with Google that you are right that it's not commonly used now.

I did use it in http://meaningness.com/metablog/how-to-think in 2013, however!

(Boring historical note, for the sake of completeness.)

Goddammit.

KHAAANN!

Hey waitaminute. James Carse published his finite/infinite games book in 1987. Was that specific formulation of human completeness as a subset of infinite Carsean (not game-theoretic) games in the MIT idea?

If not, I hereby claim this particular formulation. So there, you evil neologism squatters 😇

Sorry, yes, your making the connection with Carse is original, so far as I know!

His book *was* discussed around AI Lab at the time, though, interestingly enough. But I don't remember what was said about it; not that it was a way of formulating human-completeness so far as I know.

(I still haven't read it, although it has now been on my "really ought to get around to this" list for 29 years!)

yay

I see what you're doing. Getting to sunyata and anahata by accumulating a large antilibrary and unfinished-writing pile so mind crushes to non-being under the gravity of the unknown unknown and the unknown unwritten 😆💀

Hello Venkat. I don't dispute anything you say, you just take a long time integrating it into a coherent package and then into yourself. Some of the complex paragraphs in your essay could be expressed in a single vision, such as a light streaming onto a human retina or an optic camera chip with its grid of photo-transistors. Such a spontaneous reaction is evidence of allowing oneself to be changed by the language, by the philosophy. Isn't that what you want? How can you refactor your perception, if you keep your stuff in the awkward format of long English essays? Isn't the point to have a single language applicable both to subjective thought and the objective physical reality?

Great essay, all true. Finally someone explained the P/NP/NPC stuff so that I think I know what it is - the good old Hierarchy of Abstraction. This allows me to use my arsenal of a philosophy.

The Human Complete games are also finite, but it is hard to judge while we are human. To make the HC game a finite game, is the very definition of mysticism, occultism, ascension, bodhi, nirvana, etc, i.e. ancient historical claims there are very definite steps that one can take which lead to winning the HC game - and one will eventually take them, because the human scale pleasure and suffering becomes too predictable over many incarnations and hence controllable and eliminated to free a new capability of much finer sensing and gaming.

These processes are physical. Generate a field, force, sound, light, order, rhythm or any form of (or) energy consistent and strong enough - and the vanilla reality as you know it breaks down. The human organism or the planet or sun are examples of such generators. Various yogas or unions (which you might know!) are pieces of software to do that. The HC game solutions are defined through Karma Yoga (charity), Bhakti Yoga (love, devotion), Jnana Yoga (intellectual knowledge), as well as the more exotic Laya Yoga / Kundalini Yoga, which are extremely dangerous on human organism and mess with it directly. Add to it a nice permeable fourfold caste system and the ancient Indians in the days of the first Patanjali had it all figured out.

Yes, there is more to the life, universe and everything, than the finite problems. This is what abstraction means, winning the game leads to change of a physical substrate for computing - i.e. transistors to neurons to qubits. Which is the ultimate moving of goalposts.

It is POSSIBLE to simulate the neurons and qubits with enough transistors, but if used as a universal rule, then the needed amount of transistors becomes either as massive as the universe itself, or infinite. Which is the problem of computing capacity.

In theory, HC=IG=FG on a continuous scale, but in practice, the FG, then HC and IG are relatively discrete regions that have a life of their own. They could be called equivalent to quantization of light, which is also discrete. It is possible for an electron to be excited into a higher state by a light of higher frequency (or whatever), but no amount of lower frequency of light will excite the electron. Which is nature's way of saying that no amount of FG proficiency will GRADUALLY get one to the IG scale. There has to be a quantum leap.

An occultist would say that the animal monad can get into the human kingdom, but it is done by a jump, and only possible because the monad is inherently IG. Yay verily, the atoms themselves are inherently energy, which belongs to IG. The FG is the maya that may last billions of years, but in the strictest IG sense it is an illusion. IG is a simple infinity of dynamic energy, quantized by numbers, mostly natural numbers as well as the exotic primes and the Pi.

Yes, the "zero-hardness problem" of "do nothing for one computing cycle" is the very definition of meditation on the HC level. And yes, in many ways from the PoV of other people - medieval traders or kings - such a "do nothing" hermit occupation would be stupid.

But I don't like how you go into anti-intelligence... Or why. In a way, "God" (the pre-big-bang singularity) created (converted or simulated) the manifested (non-IG) universe through the relative "anti-intelligence" of basic logic and numbers as I described in the previous paragraph. Compared to that, the infinite singularity of energy is more "intelligent" in the Venkatian sense. This anti-intelligence, illusion and limitation is a very fundamental definition of evil or form.

Which is to say, we humans are *fundamentally* breakers of illusions, we are fundamentally good. We are problem-solvers, not problem-creators. Creating profound new limitations for ourselves is stupid, creating profound new problems for others (for example declaring a war on them) is evil. Don't be evil, Venkat!

Hello Jan. I don’t dispute anything you say, because you just take a long time to say nothing. Some of the complex paragraphs in your essay could be expressed in a single vision, such as me choking with laughter or facepalming. Such a spontaneous reaction is evidence of cognitive dissonance with your whole mental MO. How can you refactor your perception, if you keep your stuff in the awkward format of kabbalistic babble? Isn’t the point to have a single language applicable both to subjective thought AND the objective physical reality?

So a reaction on your part is evidence of cognitive dissonance on my side? In any case, if you laugh at me, you laugh at yourself, because I agree with you. I suppose we have to start from the basics.

All things can be ordered from the least variable to the most variable. Variability means either complexity, or abstraction. So there is a hierarchy of variability in nature. Simpler objects (such as atoms or cells) serve as building blocks of more complex and therefore more variable phenomena (such as people). In this hierarchy of complexity or abstraction, there are areas which computing science calls layers of abstraction. The human-equivalent measure of complexity is what you call the HC problems. Less than that, it's the FG. More than that, it's the IG.

There are certain arrangements or solutions of HC problems, which allow for maximum complexity (or variability) of human society. We call these principles of freedom / responsibility. They happen to be derived from logical consistency of behavior on a universal scale, some examples are the Golden Rule or the Non-Aggression Principle, but the best is Universally Preferable Behavior by Molyneux.

This universal consistency on the HC layer of abstraction can be called a free society. This consistency allows emergent phenomena to manifest. The free market is one such emergent phenomenon, for example. The market is a form of computing on the macroscopic scale: distributed computing, to be precise.

Distributed computing on a microscopic scale requires similar circumstances: a particular layer of abstraction of its own, which is the internet; orderly matter, which is the CPUs; and some logic to it all, which is Boolean algebra. The rules for building a global distributed computing network (such as Ethereum) and for building a free society are similar and eerily interdependent. There is little distinction needed. The market is a network of computing nodes of sorts. Their integration, or mutual trust (thanks to anonymity, data obfuscation, etc), increases the capacity of transactions, thanks to safety. This is the horizontal communication.

There is of course vertical communication as well, between layers of abstraction, or between the more and less complex beings. Whenever a less complex system interacts with a more complex one, it is called sampling, and it is subject to error due to the latter's greater variability. In reverse, it is called pattern recognition. The less complex system is like a retina or an optical camera chip, with an orderly arrangement of receptors / phototransistors, which create a grid capable of making some sense of the continuous IG out there. Or equivalently, an optical camera takes a more or less pixelated picture of reality.

I'm just saying there are some computing principles applicable on all scales of problems: FG, HC and IG. This distinction of yours is relative; from the point of view of beings more complex than us, HC could be seen as FG. What holds universally true are the principles of the hierarchy of variability, which I just described as horizontal consistent integration (i.e. orderly transistors, or equality before the law) and vertical sampling / pattern recognition. That's just two or three thoughts to keep in mind, that you can see in all good things (and see them lacking in evil things). Isn't that efficient?

Anon, I think you got sniped by an AI text generator. Reminds me of this excellent piece of programming from some years ago: http://www.elsewhere.org/pomo/

Poe's Law FTW. I was thinking that, but it would take weeks of running down references to have a stronger opinion.

Jan -- I get the sense that you're reading themes that interest you into this post rather than reacting to it. While I do see some interesting connections to the metaphysics of occult schools of thought, and I know enough about them to sort of sense where you're going with your particular train of thought, that's really not the point of this post. My intent here is a limited one, and I merely set out to explore a speculative set of ideas connecting Carse's theories to AI.

I personally find it most useful, when approaching such a broad theme, to pick a particular perspective and angle of approach rather than attempting to address all possible perspectives or angles of approach. Possibly my choices in this particular case are just not valuable for you.

Thank you! See, I'm not crazy.

My goal is refactoring the perception, I went through the process and I presume that it is your goal as well. I judge most things that you write from the point of view of that goal, not on their own (the Essence of Peopling article is excellent though). Carse's theories have value on their own (thanks for source), but they're even more valuable when fitted into the model of metaphysical universals, that is the goal of the refactored perception. By the way, in this act they are also corrected.

The refactoring of perception is a way of capitalizing on the knowledge that you already have, abstracting the universals out of it and then focusing on the universals only. Particulars then become less interesting, almost a nuisance. The universals aren't a broad theme, as there are just a few of them, though they look broad, because they can only be expressed through many examples (or a strange jargon).

So, the next thing after self-refactorization is to be interested in the method of bringing about the self-refactorization in others. Or just finding out how interested they are, or how valuable they find it. Technically, it is reaching a measure of enlightenment and I am a modern guru looking for a business model.

When I was in high school, the smart kids were too bored with the classes, so they sat on the back benches playing tic-tac-toe. This got boring very quickly. So the game of 3D tic-tac-toe was invented: 3 grids of 3x3 each, representing a 3D version of the game. This was soon expanded to a 5x5x5 grid to keep the smart kids occupied for the entire school year.

The point is, you can often amp up the number of combinations available within an FG to create the illusion of an IG. This is how people manage to find meaning in life through chess or go or cricket. Of course, once you see that the game is finite and repetitive, this suspension of disbelief quickly falls apart.

http://imgur.com/gallery/gUgkpTx

Art imitates life as much as life imitates art. Consider: the most profound anti-intelligence caricature ever devised is Don Quixote, buttressed by the picaresque and comic literary traditions. The Knight Errant who elaborates an entire ridiculous lifestyle in pursuit of irrelevant medieval ideals during Renaissance times. Quintessential pointlessness... or is it? One ends up questioning whether The Don isn't the smartest (in the anti- sense?) in the room after all.

Did you see Ran Prieur's concept of technological de-gamification? It reminded me a bit of your framing of things as infinite games, and humans as seeking out these games.

"This 1916 Guide Shows What the First Road Trips Were Like. The article looks at "Blue Books" that were densely packed with maps and instructions to navigate the extreme complexity of local roads before state and federal highways. What jumps out at me is how much fun this would have been! Every minute you're being challenged, feeling a sense of reward for staying on the route, and being right in the middle of new places. I can't think of any kind of travel that I would enjoy more (except see below).

As more people got cars, governments made driving easier with highways and signs, and driving gradually changed from something fun you do for its own sake, to some shit you have to do to get from one place to another. In a few years someone will ride a self-driving car across America, while giving all their attention to a video game that simulates the kind of exciting exploration that they would get to in the real world if it hadn't been improved so much.

I call this technological degamification. Gamification is when a boring activity is tweaked to make it more fun, and it's often done for marketing and other sinister purposes. Technological degamification is when technology is applied to an activity with the goal of making it easier, but the result is to make it less rewarding by removing too much fun stuff from human awareness, and not enough tedious stuff."

This reminds me a little of Douglas Hofstadter's writing. Whimsical, but with a purpose.